WebSight Dataset: Converting Screenshots to HTML

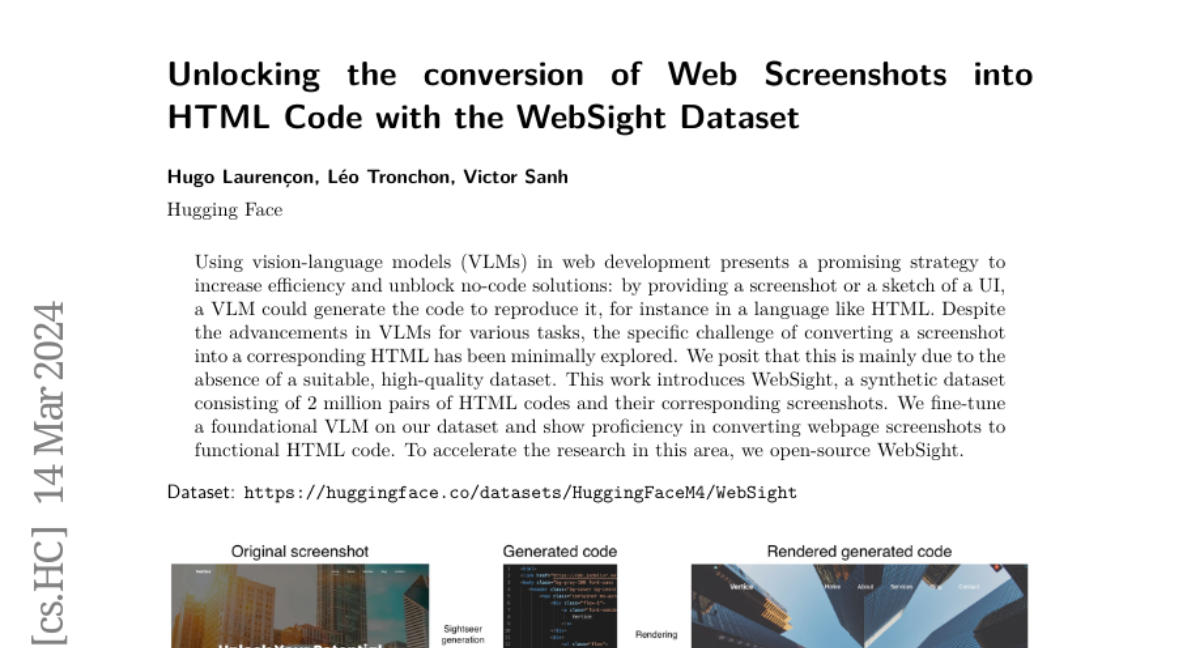

The research paper *Unlocking the Conversion of Web Screenshots into HTML Code with the WebSight Dataset* describes the creation of WebSight, a dataset designed for training vision-language models (VLMs) to convert webpage screenshots into working HTML code.

- The authors built a synthetic dataset of 2 million pairs of HTML code and corresponding screenshots, intended as training data for VLMs on web-based tasks.

- They fine-tuned a foundational VLM on WebSight and report that the resulting model is proficient at recreating webpage designs from screenshots alone.

- The paper discusses the obstacles encountered in this domain, such as generating detailed continuations, teaching language models to make use of intrinsic thoughts, and predicting beyond single binary tokens.

- A tokenwise parallel sampling algorithm, along with learnable indicator tokens to denote the beginning and end of thoughts, and an extended teacher-forcing method are proposed as solutions.
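To make the idea of an HTML and screenshot pairing concrete, here is a minimal Python sketch of how one synthetic record might be assembled. It is not the authors' pipeline, which this summary does not describe. The names `PagePair`, `build_html`, `make_pair`, and `TagCounter`, as well as the `screenshots/` naming scheme, are assumptions made for illustration. Rendering the screenshot would require a headless browser and is left out, so only the file path is recorded.

```python
from dataclasses import dataclass
from html import escape
from html.parser import HTMLParser


@dataclass
class PagePair:
    """One hypothetical training example: HTML source plus its screenshot path."""
    html: str
    screenshot_path: str


def build_html(title: str, paragraphs: list[str]) -> str:
    """Assemble a small standalone HTML page from a title and body paragraphs."""
    body = "\n".join(f"    <p>{escape(p)}</p>" for p in paragraphs)
    return (
        "<!DOCTYPE html>\n"
        "<html>\n"
        f"  <head><title>{escape(title)}</title></head>\n"
        "  <body>\n"
        f"    <h1>{escape(title)}</h1>\n"
        f"{body}\n"
        "  </body>\n"
        "</html>"
    )


class TagCounter(HTMLParser):
    """Counts opening tags as a cheap sanity check on generated markup."""

    def __init__(self):
        super().__init__()
        self.counts = {}

    def handle_starttag(self, tag, attrs):
        self.counts[tag] = self.counts.get(tag, 0) + 1


def make_pair(index: int, title: str, paragraphs: list[str]) -> PagePair:
    # The image would come from rendering the HTML in a headless browser;
    # here only an assumed, zero-padded file name is stored.
    return PagePair(
        html=build_html(title, paragraphs),
        screenshot_path=f"screenshots/{index:07d}.png",
    )


pair = make_pair(0, "Bakery & Cafe", ["Fresh bread daily.", "Open 7am to 3pm."])
counter = TagCounter()
counter.feed(pair.html)
print(pair.screenshot_path)
print(counter.counts)
```

In a setup like this, the HTML string serves as the training target and the rendered image as the model input, which is why each record keeps the two together.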

The importance of this study stems from its potential to transform web development by enabling rapid UI creation from visual inputs. As a leap forward for no-code platforms and enhanced AI assistance in web design, the WebSight dataset could significantly lower the barrier to entry for aspiring web developers and stimulate further innovations in intuitive web development interfaces.
